What does it take for children to recognize a spoken word?


The process of understanding spoken words involves three main steps: 1) taking in auditory information; 2) breaking down this information into speech sounds; and 3) mapping meaning onto these speech sounds. Previous research suggests that children who use a cochlear implant (CI) may differ from typically hearing children in the final step: how they associate meaning with spoken words, especially in contexts where there is unexpected information (Pierotti et al., 2021). Less is known about how CI-using children parse speech sounds into meaningful units, and how this parsing may support the way they understand the meaning of speech.

In what ways does the process of speech recognition differ between typically hearing children and deaf children who hear through a cochlear implant (CI)? Is there a difference in how CI-using children and typically hearing children process rhymes and other speech sounds?

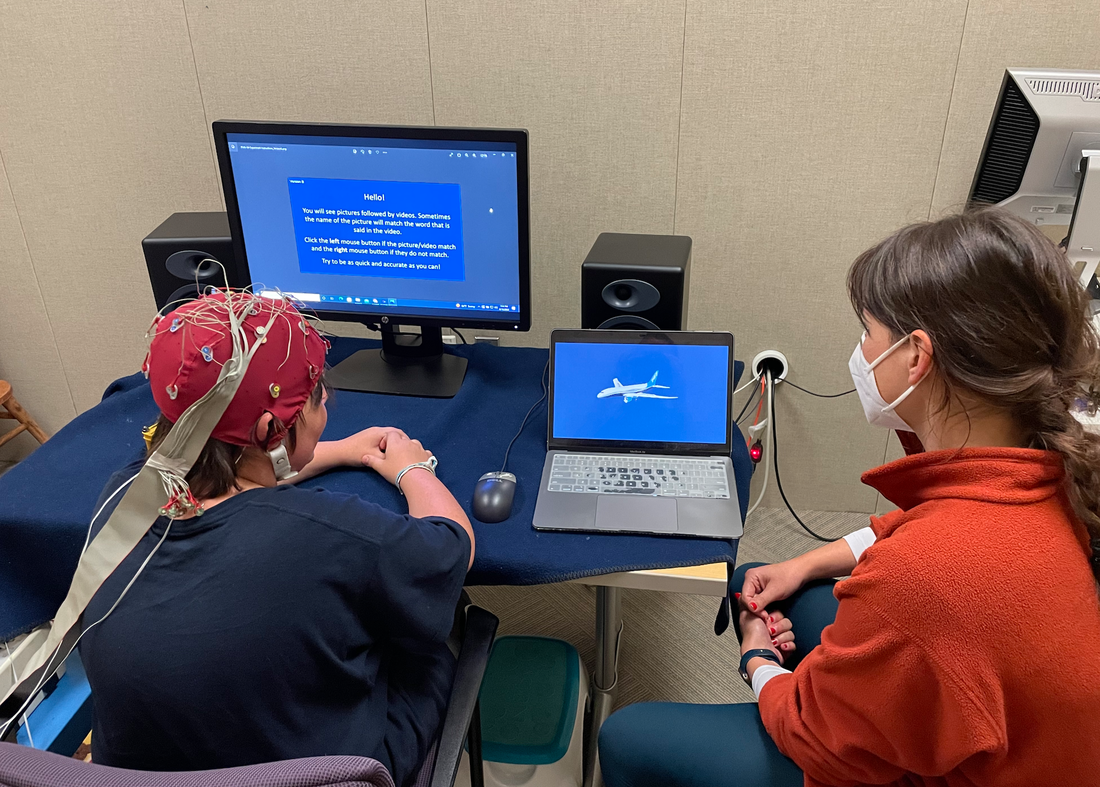

To address these questions, our new study uses EEG (electroencephalography) to measure brain waves while children view pictures and videos of spoken words. Brain activity recorded while children see pictures and hear speech can tell us more about the neural processes that support the three steps of speech recognition.

We are actively recruiting school-aged children, ages 8 to 14, who are either typically hearing or use a cochlear implant (CI). Children must be native English speakers with no known language or learning impairments.

Testing takes place in a single 1-hour session at our lab at the Center for Mind and Brain in Davis, CA. All child participants receive a toy or prize, and parents of CI-using children may receive additional compensation in the form of a gift card for travel costs. Please reach out to Staff Scientist Sharon Coffey-Corina with questions or to schedule a testing session: sccorina@ucdavis.edu, 530-754-4513.

References: